HA Control Plane with kube-vip: No Load Balancer Needed

kube-vip needs the Kubernetes API server to run leader election, but the API server is what kube-vip makes reachable. That circular dependency is the part most HA guides skip. On Kubernetes 1.29+, it combines with a kubeadm permissions change to break clusters built from older tutorials.

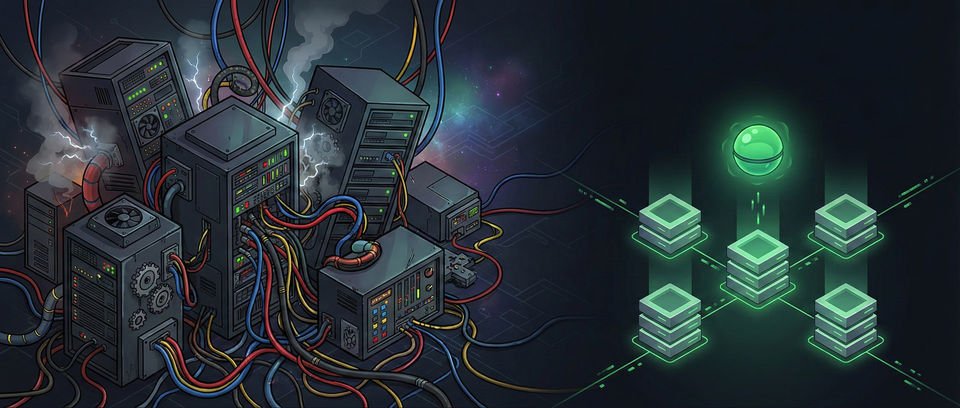

I run a 3-node HA control plane with kube-vip: one static pod manifest per node, zero external dependencies. No HAProxy, no keepalived, no cloud load balancer. This post covers the bootstrap chicken-and-egg problem, a breaking change in K8s 1.29 that most tutorials haven't caught, and real failover behavior from a running cluster.

This is Part 2 of the Building a Production-Grade Homelab series. Part 1 covered why I chose kubeadm. This one covers the first infrastructure decision after that choice: making the control plane survive a node failure.

Why Not HAProxy and keepalived?

Three control plane nodes give you redundancy, but clients (kubelet, kubectl, Cilium) still need one stable endpoint that always reaches a healthy API server. The traditional approach:

| Component | Role | Config Files |
|---|---|---|
| HAProxy | Layer 4 load balancer across API servers | haproxy.cfg + static pod manifest |
| keepalived | VRRP-based VIP failover | keepalived.conf + health check script + static pod manifest |

Five files per node, two separate processes, and a health check script that curls the API server. It works, and thousands of clusters run this way. But it's a lot of moving parts for "send traffic to a healthy API server."

kube-vip: One Static Pod, One VIP

kube-vip collapses HAProxy and keepalived into a single container that runs as a Kubernetes static pod. In ARP mode, it:

- Runs leader election using Kubernetes Lease objects

- Binds the VIP to the leader node's network interface

- Broadcasts a gratuitous ARP packet so switches update their MAC tables and neighboring hosts update their ARP caches

- Moves the VIP when the leader fails: another node acquires the lease and takes over
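The gratuitous ARP step is easy to take on faith. Here is a minimal Python sketch of the frame a node broadcasts when it claims the VIP. It follows the standard ARP layout for IPv4 over Ethernet (RFC 826). The MAC address is a made-up, locally administered example, and the sketch only builds the bytes. Actually sending them needs a raw socket, which is what the NET_RAW capability is for. This illustrates the packet format, not kube-vip's own code.

```python
import socket
import struct

BROADCAST_MAC = b"\xff" * 6
ETHERTYPE_ARP = 0x0806
HTYPE_ETHERNET = 1
PTYPE_IPV4 = 0x0800
OPER_REQUEST = 1  # gratuitous ARP is commonly sent as a request (some stacks use a reply)


def mac_to_bytes(mac: str) -> bytes:
    """Convert 'aa:bb:cc:dd:ee:ff' to 6 raw bytes."""
    return bytes(int(part, 16) for part in mac.split(":"))


def gratuitous_arp_frame(vip: str, mac: str) -> bytes:
    """Build an Ethernet frame announcing 'vip is at mac' to the whole L2 segment."""
    src_mac = mac_to_bytes(mac)
    ip = socket.inet_aton(vip)
    # Ethernet header: destination (broadcast), source MAC, EtherType
    eth_header = BROADCAST_MAC + src_mac + struct.pack("!H", ETHERTYPE_ARP)
    # ARP payload: sender IP == target IP is what makes it "gratuitous"
    arp_payload = struct.pack(
        "!HHBBH6s4s6s4s",
        HTYPE_ETHERNET,
        PTYPE_IPV4,
        6,             # hardware address length
        4,             # protocol address length
        OPER_REQUEST,
        src_mac,       # sender hardware address: this node's NIC
        ip,            # sender protocol address: the VIP
        b"\x00" * 6,   # target hardware address: unknown, ignored
        ip,            # target protocol address: also the VIP
    )
    return eth_header + arp_payload


# Hypothetical MAC; 10.10.30.10 is the VIP from this post
frame = gratuitous_arp_frame("10.10.30.10", "02:00:00:00:00:01")
print(len(frame), frame.hex())
```

The 14-byte Ethernet header plus the 28-byte ARP payload gives a 42-byte frame. Every host on the segment sees the new MAC for the VIP without anyone having asked for it.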

No external processes. No config files to synchronize. The static pod manifest lives in /etc/kubernetes/manifests/ and kubelet manages its lifecycle. The --services flag in the manifest generator also enables LoadBalancer-type Service handling, so you get control plane HA and service load balancing from the same pod.

My Setup

Three Lenovo M80q mini-PCs on a flat L2 network:

```
k8s-cp1  10.10.30.11 ─┐
k8s-cp2  10.10.30.12 ─┤── VIP: 10.10.30.10 (api.k8s.rommelporras.com)
k8s-cp3  10.10.30.13 ─┘
```

All three nodes run the same kube-vip static pod. At any given moment, one node holds the VIP. The other two wait for their turn.

Chicken-and-Egg: kube-vip Needs the API Server It Protects

This is the part most kube-vip tutorials skip. And it's the part that will break your cluster if you don't understand it.

kube-vip uses Kubernetes Lease objects for leader election. Lease objects live in the API server. But the API server is what kube-vip is supposed to make reachable. So kube-vip needs the API server to function, but the API server needs kube-vip's VIP to be reachable by other nodes.

Circular dependency. On the first node, during kubeadm init, the bootstrap plays out like this:

1. kubelet starts kube-vip (a static pod) before the API server is up

2. kube-vip tries to acquire a Lease, but the API server doesn't exist yet

3. kube-vip crashes with "connection refused"

4. kubelet restarts kube-vip (this is normal static pod behavior)

5. kubeadm init starts the API server on the same node

6. kube-vip connects to the local API server, acquires the Lease, and binds the VIP

7. Now the VIP is live, and other nodes can reach the API server through it

Steps 2-4 repeat until step 5 happens. On my cluster, kube-vip accumulated 10-19 restarts per node during bootstrapping. That's expected. Don't panic when you see RESTARTS: 17 on a kube-vip pod. Check the logs: if the last few entries show successful lease renewal, it's healthy.

The hostAliases Trick

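There's a subtle detail in step 6. kube-vip mounts /etc/kubernetes/admin.conf for its credentials, but in control plane mode it connects to https://kubernetes:6443 instead of the server address in that file (which, with the kubeadm config below, is the VIP's hostname). Without help, that name wouldn't lead anywhere useful this early. Cluster DNS isn't running yet, and any route through the VIP depends on the address kube-vip hasn't bound.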

The generated manifest includes a hostAliases entry that maps kubernetes to 127.0.0.1. This forces kube-vip to talk to the local API server on the same node, bypassing the VIP entirely. No circular dependency.

```yaml
# From the generated kube-vip manifest
hostAliases:
- hostnames:
  - kubernetes
  ip: 127.0.0.1
```

Small detail. Critical for understanding why kube-vip works at all during bootstrap.
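Here is a small Python sketch of why the alias works. kubelet writes hostAliases entries into the pod's /etc/hosts, and under the usual "files before DNS" resolver order, "kubernetes" resolves to 127.0.0.1 before any DNS lookup happens. The hosts-file layout below is a simplified assumption (the real file also contains the pod's own entries). render_hosts and resolve are hypothetical helpers, not kubelet code.

```python
from typing import Optional
from urllib.parse import urlsplit

# The hostAliases block from the manifest, as a Python structure
HOST_ALIASES = [{"ip": "127.0.0.1", "hostnames": ["kubernetes"]}]


def render_hosts(host_aliases: list) -> str:
    """Render a simplified /etc/hosts: a localhost line plus one line per alias."""
    lines = ["127.0.0.1\tlocalhost", "# Entries added by HostAliases."]
    for alias in host_aliases:
        lines.append(alias["ip"] + "\t" + "\t".join(alias["hostnames"]))
    return "\n".join(lines) + "\n"


def resolve(hosts_text: str, hostname: str) -> Optional[str]:
    """Return the first IP mapped to hostname in hosts-file text, or None."""
    for line in hosts_text.splitlines():
        line = line.split("#", 1)[0].strip()  # drop comments and blank lines
        if not line:
            continue
        ip, *names = line.split()
        if hostname in names:
            return ip
    return None


server = "https://kubernetes:6443"
host = urlsplit(server).hostname  # "kubernetes"
print(host, "->", resolve(render_hosts(HOST_ALIASES), host))
```

The VIP's DNS name is not in this file, so only the "kubernetes" name is short-circuited to the local API server.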

Breaking Change in K8s 1.29+

If you're running Kubernetes 1.29 or newer (my cluster runs 1.35.0), there's a breaking change that most tutorials haven't caught up with.

Before 1.29, admin.conf's user belonged to the system:masters group, which has full cluster-admin rights from the moment kubeadm init creates the file. kube-vip could mount it and immediately interact with the API server for leader election.

Starting in 1.29, kubeadm puts admin.conf's user in the kubeadm:cluster-admins group instead. That group only gets cluster-admin through a ClusterRoleBinding that kubeadm creates after the control plane is running. During bootstrap, kube-vip mounts admin.conf, tries to create a Lease, gets Forbidden, and crashloops.

The fix: mount super-admin.conf instead. This file retains full permissions during bootstrap. But change only the host path, not the mount path inside the container. kube-vip still expects to find its kubeconfig at /etc/kubernetes/admin.conf inside the pod.

```yaml
# Before workaround (won't work on K8s 1.29+)
volumes:
- hostPath:
    path: /etc/kubernetes/admin.conf # ← restricted permissions during bootstrap
  name: kubeconfig

# After workaround
volumes:
- hostPath:
    path: /etc/kubernetes/super-admin.conf # ← full permissions for bootstrap
  name: kubeconfig

# mountPath stays the same in both cases:
volumeMounts:
- mountPath: /etc/kubernetes/admin.conf # ← kube-vip reads from here inside the container
  name: kubeconfig
```
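To audit a manifest, look up which hostPath backs the volume mounted at /etc/kubernetes/admin.conf. This Python sketch assumes the manifest has already been parsed into a dict (for example with a YAML library, not shown) and uses a trimmed-down pod spec. kubeconfig_host_path and expected_host_path are hypothetical helpers that encode the rule described above.

```python
from typing import Optional

KUBECONFIG_MOUNT = "/etc/kubernetes/admin.conf"  # path kube-vip reads inside the container


def kubeconfig_host_path(pod: dict) -> Optional[str]:
    """Return the host file behind the volume mounted at KUBECONFIG_MOUNT, if any."""
    spec = pod["spec"]
    mounts = spec["containers"][0].get("volumeMounts", [])
    volume_name = next((m["name"] for m in mounts if m["mountPath"] == KUBECONFIG_MOUNT), None)
    for volume in spec.get("volumes", []):
        if volume["name"] == volume_name and "hostPath" in volume:
            return volume["hostPath"]["path"]
    return None


def expected_host_path(k8s_minor: int, bootstrapping: bool) -> str:
    """super-admin.conf only while bootstrapping on 1.29+; admin.conf otherwise."""
    if k8s_minor >= 29 and bootstrapping:
        return "/etc/kubernetes/super-admin.conf"
    return "/etc/kubernetes/admin.conf"


# Trimmed, hypothetical pod spec in the "after workaround" state
pod = {
    "spec": {
        "containers": [{"name": "kube-vip",
                        "volumeMounts": [{"mountPath": KUBECONFIG_MOUNT, "name": "kubeconfig"}]}],
        "volumes": [{"name": "kubeconfig",
                     "hostPath": {"path": "/etc/kubernetes/super-admin.conf"}}],
    }
}
print(kubeconfig_host_path(pod) == expected_host_path(35, bootstrapping=True))
```

Running the same check after kubeadm init with bootstrapping=False should flag a manifest that was never reverted.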

My Ansible playbook (02-kube-vip.yml) applies this automatically:

```yaml
- name: Apply K8s 1.29+ workaround (hostPath to super-admin.conf)
  ansible.builtin.replace:
    path: /etc/kubernetes/manifests/kube-vip.yaml
    regexp: '(hostPath:\n\s+path:) /etc/kubernetes/admin\.conf'
    replace: '\1 /etc/kubernetes/super-admin.conf'
```
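ansible.builtin.replace uses Python regular expressions (with MULTILINE enabled), so the same pattern can be dry-run in plain Python before it touches a node. In the single-quoted YAML string, '\n' reaches the regex engine as backslash-n, which Python's re reads as a newline, so the raw strings below are equivalent. The manifest fragment and its indentation are assumptions about the generated file. The check confirms that only the hostPath line changes and the mountPath line is left alone.

```python
import re

# Same pattern and replacement as the Ansible task, compiled with MULTILINE like the module
PATTERN = re.compile(r"(hostPath:\n\s+path:) /etc/kubernetes/admin\.conf", re.MULTILINE)
REPLACEMENT = r"\1 /etc/kubernetes/super-admin.conf"

# Trimmed manifest fragment (indentation assumed)
MANIFEST = """\
    volumeMounts:
    - mountPath: /etc/kubernetes/admin.conf
      name: kubeconfig
  volumes:
  - hostPath:
      path: /etc/kubernetes/admin.conf
    name: kubeconfig
"""

patched, count = PATTERN.subn(REPLACEMENT, MANIFEST)
print(f"{count} substitution(s)")
print(patched)
```

The mountPath line never matches, because the pattern requires "hostPath:" on the line immediately above "path:".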

The kubeadm HA documentation confirms this workaround, but it's buried in a note that's easy to miss. If you follow a pre-2024 kube-vip tutorial on Kubernetes 1.29+, your kube-vip pod will crashloop with RBAC errors. Now you know why.

Revert After Init

Once kubeadm init completes, admin.conf gets its proper RBAC bindings. At that point, revert the hostPath to admin.conf. Leaving super-admin.conf mounted in production is a real security risk: if someone compromises the kube-vip container, the mounted kubeconfig gives them unrestricted cluster access, bypassing all RBAC.

Playbook 03-init-cluster.yml handles this automatically after init succeeds:

```yaml
- name: Revert kube-vip to use admin.conf
  ansible.builtin.replace:
    path: /etc/kubernetes/manifests/kube-vip.yaml
    regexp: 'path: /etc/kubernetes/super-admin\.conf'
    replace: 'path: /etc/kubernetes/admin.conf'
```

Two playbooks. One applies the workaround, the next reverts it. Automated, idempotent, no manual steps to forget.
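The idempotency claim is easy to check offline. This Python sketch replays both regexes (forward from 02-kube-vip.yml, revert from 03-init-cluster.yml) against a sample hostPath fragment, the way ansible.builtin.replace would. Running either step twice changes nothing the second time, and forward-then-revert restores the original text. The fragment and the apply_rule helper are illustrative assumptions.

```python
import re

# (pattern, replacement) pairs copied from the two Ansible tasks
FORWARD = (r"(hostPath:\n\s+path:) /etc/kubernetes/admin\.conf",
           r"\1 /etc/kubernetes/super-admin.conf")
REVERT = (r"path: /etc/kubernetes/super-admin\.conf",
          "path: /etc/kubernetes/admin.conf")

# Hypothetical manifest fragment before any playbook runs
ORIGINAL = """\
  volumes:
  - hostPath:
      path: /etc/kubernetes/admin.conf
    name: kubeconfig
"""


def apply_rule(text: str, rule: tuple) -> tuple:
    """Apply one replace rule; return (new_text, whether anything changed)."""
    pattern, replacement = rule
    new_text, count = re.subn(pattern, replacement, text, flags=re.MULTILINE)
    return new_text, count > 0


patched, changed1 = apply_rule(ORIGINAL, FORWARD)
_, changed2 = apply_rule(patched, FORWARD)     # second run: nothing left to match
reverted, changed3 = apply_rule(patched, REVERT)
_, changed4 = apply_rule(reverted, REVERT)     # second revert: also a no-op
print(changed1, changed2, changed3, changed4, reverted == ORIGINAL)
```

A "changed" flag of False on the second run is what lets Ansible report "ok" instead of "changed" when the playbooks are re-run.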

Bootstrap: The Details That Matter

Rather than walking through every ctr and scp command (the Ansible playbooks handle that), here are the three details that trip people up.

The kubeadm config must set controlPlaneEndpoint to the VIP:

```yaml
apiVersion: kubeadm.k8s.io/v1beta4
kind: ClusterConfiguration
kubernetesVersion: "v1.35.0"
controlPlaneEndpoint: "api.k8s.rommelporras.com:6443"
networking:
  podSubnet: "10.244.0.0/16"
  serviceSubnet: "10.96.0.0/12"
apiServer:
  certSANs:
  - "api.k8s.rommelporras.com"
  - "10.10.30.10"
  - "10.10.30.11"
  - "10.10.30.12"
  - "10.10.30.13"
  - "k8s-cp1"
  - "k8s-cp2"
  - "k8s-cp3"
```

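The certSANs list must include the VIP address. kubeadm automatically adds each node's own advertise address and node name to the API server certificate it generates on that node. It won't add the VIP's IP here, because controlPlaneEndpoint is a DNS name. Without that entry, clients that connect to https://10.10.30.10:6443 fail TLS verification. Listing the node IPs and hostnames as well is harmless and documents every path a client might take.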
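A cheap pre-flight check is to compare the endpoints clients will actually use against the certSANs list before running kubeadm init. This Python sketch uses the addresses from this post, and missing_sans is a hypothetical helper. It only checks the config, not the certificate kubeadm eventually writes. For that, inspect /etc/kubernetes/pki/apiserver.crt with openssl x509 -noout -text.

```python
import ipaddress

CERT_SANS = [
    "api.k8s.rommelporras.com",
    "10.10.30.10",
    "10.10.30.11",
    "10.10.30.12",
    "10.10.30.13",
    "k8s-cp1",
    "k8s-cp2",
    "k8s-cp3",
]

# Every name or address a client might put in its server URL (assumed for this cluster)
REQUIRED = ["api.k8s.rommelporras.com", "10.10.30.10",
            "10.10.30.11", "10.10.30.12", "10.10.30.13"]


def normalize(entry: str) -> str:
    """Canonical form: standard IP text for addresses, lowercase for DNS names."""
    try:
        return str(ipaddress.ip_address(entry))
    except ValueError:
        return entry.lower()


def missing_sans(cert_sans: list, required: list) -> list:
    """Required endpoints that no certSANs entry covers (exact match, no wildcards)."""
    present = {normalize(s) for s in cert_sans}
    return [r for r in required if normalize(r) not in present]


print(missing_sans(CERT_SANS, REQUIRED))       # nothing missing: []
print(missing_sans(CERT_SANS[:1], REQUIRED))   # only the DNS name listed
```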

kubeadm init runs with --skip-phases=addon/kube-proxy because Cilium replaces kube-proxy with eBPF in this stack (Part 3 covers why).

kubeadm join does not copy static pod manifests. After cp2 and cp3 join the cluster, you must copy the kube-vip manifest into their /etc/kubernetes/manifests/ directories. Skip this step and only cp1 can ever hold the VIP: when cp1 goes down, the VIP goes with it. This is another detail most guides gloss over.
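The failure mode is easy to model: only nodes that run the kube-vip static pod can ever win the lease. This Python sketch uses a hypothetical inventory of which nodes have the manifest and which are up. eligible_vip_holders is an illustrative helper, not part of kube-vip.

```python
def eligible_vip_holders(has_manifest: dict, is_up: dict) -> list:
    """Nodes that could hold the VIP: running kube-vip AND currently up."""
    return sorted(node for node, present in has_manifest.items()
                  if present and is_up.get(node, False))


# Scenario: cp1 is down for a reboot, cp2 and cp3 are healthy
NODES_UP_EXCEPT_CP1 = {"k8s-cp1": False, "k8s-cp2": True, "k8s-cp3": True}

# Manifest only on cp1 (never copied after kubeadm join)
forgot_copy = {"k8s-cp1": True, "k8s-cp2": False, "k8s-cp3": False}
# Manifest on all three control plane nodes
copied = {"k8s-cp1": True, "k8s-cp2": True, "k8s-cp3": True}

print(eligible_vip_holders(forgot_copy, NODES_UP_EXCEPT_CP1))  # empty: the VIP is gone
print(eligible_vip_holders(copied, NODES_UP_EXCEPT_CP1))       # cp2 and cp3 can take over
```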

Security: Dropping Capabilities

The generated kube-vip manifest includes a minimal security context:

securityContext:

capabilities:

add:

- NET_ADMIN

- NET_RAW

drop:

- ALL

kube-vip only needs NET_ADMIN (to bind the VIP to an interface) and NET_RAW (to send gratuitous ARP packets). It drops everything else. Many older guides run kube-vip fully privileged (privileged: true), which grants the container every Linux capability, access to the host's device files, and the ability to load kernel modules. The current manifest generator does the right thing by default.

Verify after installation: for each control plane node, run kubectl get pod kube-vip-<node> -n kube-system -o jsonpath='{.spec.containers[0].securityContext}' and confirm that NET_ADMIN and NET_RAW are the only added capabilities. If you see privileged: true instead, you're running an outdated manifest.
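The jsonpath output is a JSON object, so the check can be scripted. This Python sketch takes that JSON text and flags anything beyond NET_ADMIN and NET_RAW. check_security_context is a hypothetical helper, and the sample string mirrors the securityContext shown above rather than captured output.

```python
import json

ALLOWED_ADDS = {"NET_ADMIN", "NET_RAW"}


def check_security_context(raw: str) -> list:
    """Return a list of problems in a container securityContext given as JSON text."""
    ctx = json.loads(raw) if raw.strip() else {}
    problems = []
    if ctx.get("privileged"):
        problems.append("privileged: true (outdated manifest?)")
    caps = ctx.get("capabilities") or {}
    extra = set(caps.get("add") or []) - ALLOWED_ADDS
    if extra:
        problems.append(f"unexpected added capabilities: {sorted(extra)}")
    if "ALL" not in (caps.get("drop") or []):
        problems.append("capabilities.drop does not include ALL")
    return problems


# Same shape as the manifest's securityContext; substitute the jsonpath output per node
sample = '{"capabilities":{"add":["NET_ADMIN","NET_RAW"],"drop":["ALL"]}}'
print(check_security_context(sample) or "OK")
```

An empty result means the pod matches the minimal profile. Anything else lists exactly what deviates.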

Failover: What Actually Happens

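kube-vip's failover speed depends on Lease timing. The usual Kubernetes leader-election defaults (the values kube-controller-manager and kube-scheduler use) are conservative: a 15-second lease duration, a 10-second renew deadline, and a 2-second retry period. My cluster uses the more aggressive values from the kube-vip manifest generator: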

| Parameter | Default | My Cluster |
|---|---|---|
| Lease duration | 15s | 5s |
| Renew deadline | 10s | 3s |
| Retry period | 2s | 1s |

With these settings, if the leader node goes down, another node acquires the VIP in roughly 5-10 seconds. The trade-off: more frequent API server calls for lease renewal. On a 3-node homelab, the extra load is negligible.
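A back-of-the-envelope model makes the table concrete. This Python sketch assumes three things: the leader renews every retry period, the lease expires one lease duration after the last renewal, and a standby polls every retry period. It ignores client-go's jitter, ARP propagation, and client reconnects, which is partly why observed failover runs a bit above this lease-only window. The validate method mirrors the ordering that client-go's leader election requires (lease duration > renew deadline > 1.2 × retry period).

```python
from dataclasses import dataclass

JITTER_FACTOR = 1.2  # client-go leaderelection.JitterFactor


@dataclass(frozen=True)
class LeaseTiming:
    lease_duration: float  # seconds
    renew_deadline: float
    retry_period: float

    def validate(self) -> None:
        """Same ordering constraints client-go's leader election enforces."""
        if self.lease_duration <= self.renew_deadline:
            raise ValueError("lease duration must be greater than renew deadline")
        if self.renew_deadline <= JITTER_FACTOR * self.retry_period:
            raise ValueError("renew deadline must be greater than 1.2 x retry period")

    def failover_window(self) -> tuple:
        """Rough (best, worst) seconds from leader crash to a standby holding the lease.

        Best: the last renewal happened almost one retry period before the crash.
        Worst: the crash lands right after a renewal, and the standby's next poll
        comes almost one retry period after the lease expires.
        """
        return (self.lease_duration - self.retry_period,
                self.lease_duration + self.retry_period)


for name, timing in {"default": LeaseTiming(15, 10, 2),
                     "my cluster": LeaseTiming(5, 3, 1)}.items():
    timing.validate()
    best, worst = timing.failover_window()
    print(f"{name}: roughly {best:g}-{worst:g}s of lease-only failover")
```

Shrinking the lease duration is what moves the window. The retry period mostly sets how wide it is.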

What happens during those 5-10 seconds? Running pods keep running. kubelet caches its last known state and continues managing containers. You can't run kubectl commands, and no new scheduling decisions happen, but existing workloads are unaffected. The control plane is unavailable, not the data plane. A 5-second API outage during a node reboot is invisible to end users hitting your services.

For comparison, keepalived with VRRP can fail over in under 3 seconds because nodes exchange multicast advertisements directly, with no API server involved. If the fastest possible failover matters to you, keepalived is the better choice. For a homelab where 5 seconds of API unavailability is a non-event, kube-vip's simplicity wins.

My cluster has seen 3 lease transitions since deployment. Each one was a node reboot. The VIP moved to another node, workloads continued running, and I didn't notice until I checked the lease history.

```shell
# Check current VIP leader
$ kubectl get lease plndr-cp-lock -n kube-system -o jsonpath='{.spec.holderIdentity}'
k8s-cp3

# Check transition history
$ kubectl get lease plndr-cp-lock -n kube-system -o jsonpath='{.spec.leaseTransitions}'
3
```
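To read more than one field at a time, kubectl get lease plndr-cp-lock -n kube-system -o json returns the whole Lease object, and a few lines of Python can summarize it. This sketch assumes the coordination.k8s.io/v1 Lease field names (holderIdentity, leaseDurationSeconds, renewTime, leaseTransitions) and the microsecond-precision UTC timestamp Kubernetes uses for renewTime. The sample object and the summarize_lease helper are made up for illustration.

```python
import json
from datetime import datetime, timezone

# Hypothetical sample, trimmed to the fields used below
SAMPLE = """{
  "metadata": {"name": "plndr-cp-lock", "namespace": "kube-system"},
  "spec": {
    "holderIdentity": "k8s-cp3",
    "leaseDurationSeconds": 5,
    "renewTime": "2026-01-20T08:00:00.000000Z",
    "leaseTransitions": 3
  }
}"""


def summarize_lease(raw: str, now: datetime) -> dict:
    """Report who holds the lease, how stale it is, and whether it has expired."""
    spec = json.loads(raw)["spec"]
    renewed = datetime.strptime(spec["renewTime"], "%Y-%m-%dT%H:%M:%S.%fZ")
    renewed = renewed.replace(tzinfo=timezone.utc)
    age = (now - renewed).total_seconds()
    return {
        "holder": spec.get("holderIdentity"),
        "transitions": spec.get("leaseTransitions", 0),
        "seconds_since_renew": age,
        "expired": age > spec["leaseDurationSeconds"],
    }


now = datetime(2026, 1, 20, 8, 0, 2, tzinfo=timezone.utc)  # fixed "now" for the sample
print(summarize_lease(SAMPLE, now))
```

Against a live cluster, pass the kubectl JSON output and datetime.now(timezone.utc). A healthy leader should show seconds_since_renew well under the lease duration.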

Resource Footprint

kube-vip is negligible compared to other stack components. Unlike Cilium (60m CPU, 250MB RAM per node from Part 3), kube-vip is a single static pod that mostly sleeps between lease renewals. The overhead is measured in single-digit millicores and tens of megabytes. On a 3-node cluster with 16GB RAM per node, kube-vip doesn't register in resource planning.

The Comparison Table

For anyone evaluating HA options for a kubeadm homelab:

| | kube-vip (ARP) | keepalived + HAProxy | External LB | DNS Round-Robin |
|---|---|---|---|---|
| Components | 1 static pod | 2 processes + health script | Cloud/HW LB | DNS A records |
| Files to manage | 1 YAML | 4+ config files | Provider config | DNS zone |
| External deps | None | None | Cloud account ($) | DNS provider |
| Failover speed | 5-15s (Lease) | <3s (VRRP) | Provider-dependent | Minutes (DNS TTL) |
| Complexity | Low | High | Low (managed) | Low |
| Best for | Homelab, simple L2 | Low-latency requirements | Cloud clusters | Dev/test only |

kube-vip isn't the fastest. It's the simplest, with zero external dependencies. For a homelab, that's the right trade-off.

What I'd Change

Two things I'd do differently if I bootstrapped this cluster again.

Upgrade to v1.0.4. My cluster runs v1.0.3. Version 1.0.4, released January 2026, includes fixes for the leader election retry logic and optimistic lock conflicts. My cp3 node currently logs "Failed to update lock" errors every second, roughly 86,000 lines per day. The lease still renews and the VIP stays functional, but the log volume is significant and the unnecessary API server calls add up. v1.0.4 should eliminate this. It's a low-risk patch upgrade that I should have done already.
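The 86,000 figure is just one line per second times 86,400 seconds. To measure the rate instead of estimating it, count matching lines over a known window (for example from kubectl logs kube-vip-<node> -n kube-system --since=1h) and scale to a day. This Python sketch assumes only that the error text contains "Failed to update lock". It doesn't rely on the rest of the log format, and the sample lines are invented.

```python
SECONDS_PER_DAY = 24 * 60 * 60  # 86,400


def projected_daily_lines(log_lines, needle: str, window_seconds: float) -> float:
    """Count lines containing `needle` in a window of known length and scale to 24 hours."""
    matches = sum(1 for line in log_lines if needle in line)
    return matches * SECONDS_PER_DAY / window_seconds


# Invented sample: 60 matching lines in a 60-second window, plus one unrelated line
sample = ["... Failed to update lock ..."] * 60 + ["... unrelated line ..."]
print(round(projected_daily_lines(sample, "Failed to update lock", 60)))
```

With real logs, use the --since window (3600 seconds for 1h) as window_seconds. Comparing the projection before and after the upgrade shows whether the fix landed.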

Scrape Prometheus metrics. kube-vip exposes metrics on port 2112 (prometheus_server: ":2112" in the manifest). I have a full Prometheus + Grafana stack running and I'm not scraping kube-vip. The useful metrics include kube_vip_leader_is_leader (binary, which node holds the VIP), lease acquisition duration, and ARP broadcast counts. A Grafana panel showing leader transitions over time would make failover events visible at a glance instead of requiring manual kubectl get lease commands.
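Before building the Grafana panel, a quick sanity check is to read the metrics endpoint directly. This Python sketch parses the Prometheus text exposition format by hand and looks up one metric. It ignores timestamps and label-escaping edge cases. The metric name comes from the paragraph above and is not verified here, and the sample payload is invented. Fetching from http://<node-ip>:2112/metrics assumes the metrics server listens on the node's address, which should hold because kube-vip runs with host networking.

```python
from urllib.request import urlopen


def fetch(url: str, timeout: float = 5.0) -> str:
    """Fetch a metrics page as text (not called in the demo below)."""
    with urlopen(url, timeout=timeout) as resp:
        return resp.read().decode("utf-8")


def parse_metric(text: str, name: str) -> dict:
    """Return {label_string: value} for every sample of metric `name`."""
    samples = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):  # skip HELP/TYPE comments and blanks
            continue
        if "{" in line:
            metric, _, rest = line.partition("{")
            labels, _, value_part = rest.rpartition("}")
        else:
            metric, _, value_part = line.partition(" ")
            labels = ""
        if metric == name:
            samples[labels] = float(value_part.split()[0])  # drop optional timestamp
    return samples


# Invented sample payload
SAMPLE = """\
# invented sample payload
process_resident_memory_bytes 2.5e+07
kube_vip_leader_is_leader 1
"""

# Against a live node (not run here): text = fetch("http://10.10.30.11:2112/metrics")
print(parse_metric(SAMPLE, "kube_vip_leader_is_leader"))
```

Running it against all three node IPs should show exactly one node reporting 1, which is the same fact the kubectl get lease check gives you.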

What's Next

This post is the second in the "Building a Production-Grade Homelab" series:

- Why kubeadm Over k3s, RKE2, and Talos in 2026

- HA Control Plane with kube-vip: No Load Balancer Needed (you are here)

- Cilium Deep Dive: What Replacing kube-proxy Actually Means

- Gateway API vs Ingress: No Ingress Controller Needed

- Distributed Storage with Longhorn: 2 Replicas Are Enough

- The Modern Logging Stack: Loki + Alloy (Why Not Promtail)

- Alerting That Actually Wakes You Up: Discord, Email, and Dead Man's Switches

- Self-Hosted GitLab: CI/CD Without Cloud Vendor Lock-in

The next post covers Cilium, the CNI that makes kube-proxy obsolete. It's also the reason kubeadm init runs with --skip-phases=addon/kube-proxy in the bootstrap above. Once you have an HA control plane, the network layer is what makes it useful.

All code referenced in this post is from my homelab repository. The Ansible playbooks, Helm values, and manifests are the same ones running in production.