Cilium Deep Dive: What Replacing kube-proxy Actually Means

Almost every Kubernetes cluster runs kube-proxy by default. It's the component you never think about until you have 200 services and your iptables chains take seconds to update. Cilium replaces kube-proxy entirely with eBPF programs that run inside the Linux kernel, turning O(n) sequential rule matching into O(1) hash lookups. This isn't theoretical. My 3-node homelab has been running without kube-proxy for months, and the difference shows up in ways I didn't expect.

This is Part 3 of the Building a Production-Grade Homelab series. Part 1 covered why kubeadm. Part 2 covered HA with kube-vip. Now we're replacing the default networking data plane.

What kube-proxy Actually Does

kube-proxy watches the Kubernetes API for Service and EndpointSlice objects (Endpoints in older releases), then programs the host's networking rules so pods can reach services. In its default iptables mode, that means generating iptables rules for every Service/Endpoint pair.

The problem is structural. When a packet arrives at a node, the kernel evaluates iptables rules sequentially until it finds a match. Add 200 services with 3 backends each, and you're looking at 600+ rules that a packet may have to be checked against. Every time a Service or Endpoint changes, kube-proxy rewrites the rules in bulk while holding the kernel's iptables lock, so updates get slower as the rule count grows.

IPVS mode improves the lookup performance with hash tables, but it still relies on the kernel's conntrack subsystem for connection tracking. Under high throughput, conntrack table exhaustion leads to dropped packets. IPVS is a better implementation of the same architecture, not a different approach.

Neither mode provides any visibility into the traffic it routes. If a packet gets dropped, you're reaching for tcpdump.

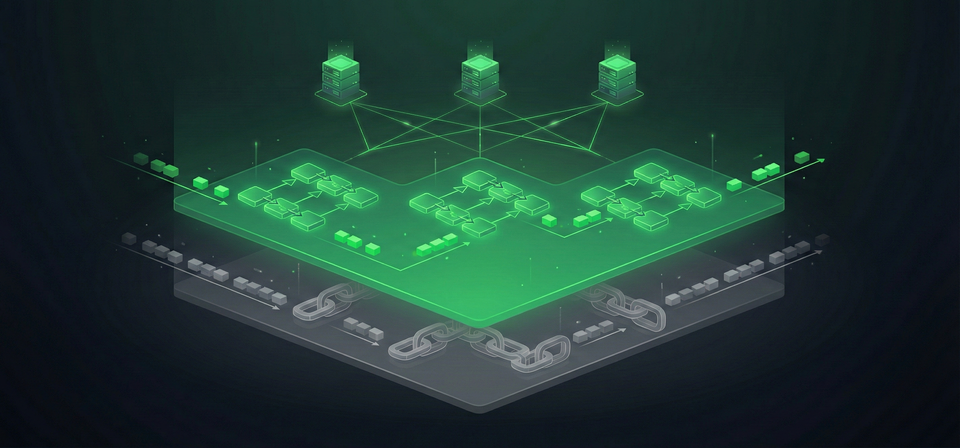

How Cilium eBPF Changes the Model

Cilium doesn't optimize iptables. It bypasses iptables entirely by attaching eBPF programs directly to the kernel's networking hooks. Service lookups happen through BPF hash maps instead of sequential rule chains.

| | kube-proxy (iptables) | Cilium eBPF |
|---|---|---|
| Service lookup | Sequential rule matching (O(n)) | Hash map lookup (O(1)) |
| East-west LB | Per-packet DNAT at prerouting | Socket-level at connect() |
| Rule updates | Flush and rewrite all chains | Atomic BPF map update |
| Observability | None | Hubble (L3/L4/L7 flows) |
| Masquerading | iptables MASQUERADE | eBPF (optional, bpf.masquerade) |
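To make the first row of the table concrete, here is a toy comparison in Python. It is only a sketch of the two lookup strategies, not how iptables or BPF maps are implemented; the addresses and the `SERVICES` table are made up for illustration.

```python
from typing import Optional

# Toy service table: 200 ClusterIPs, each mapped to one backend address.
# Every address here is invented for the example.
SERVICES = [(f"10.96.0.{i}", f"10.0.0.{i % 250 + 1}") for i in range(200)]


def lookup_sequential(rules: list[tuple[str, str]], dst_ip: str) -> tuple[Optional[str], int]:
    """Walk the rule list top to bottom, the way a rule chain is evaluated."""
    comparisons = 0
    for cluster_ip, backend in rules:
        comparisons += 1
        if cluster_ip == dst_ip:
            return backend, comparisons
    return None, comparisons


def lookup_hashed(table: dict[str, str], dst_ip: str) -> Optional[str]:
    """One keyed lookup, loosely analogous to a hash map lookup."""
    return table.get(dst_ip)


service_map = dict(SERVICES)
last_ip = SERVICES[-1][0]

backend, steps = lookup_sequential(SERVICES, last_ip)
print(f"sequential: {steps} comparisons to find {last_ip}")
print(f"hashed: one keyed lookup -> {lookup_hashed(service_map, last_ip)}")
```

The sequential version gets slower for services that sit further down the list; the keyed lookup costs the same regardless of how many services exist.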

Socket-Level Load Balancing

This is the part most articles skip. For east-west traffic (pod-to-service within the cluster), Cilium intercepts at the connect() system call, before a packet even exists. When Pod A connects to a ClusterIP, Cilium's eBPF program translates the destination to the backend pod's real IP at the socket layer. The kernel then builds the packet with the correct destination from the start.

The result: no per-packet DNAT, no SNAT, no conntrack entries for intra-cluster traffic. The kernel sees direct pod-to-pod connections. On the homelab, the cilium_lb4_reverse_sk BPF map holds over 71,000 entries tracking socket-level translations for established connections.
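A rough mental model of that connect-time translation, written as plain Python rather than eBPF. The service table, the cookie numbers, and the function names are all hypothetical; the real logic lives in Cilium's socket-level programs and is considerably more involved.

```python
import random

# Hypothetical service table: (ClusterIP, port) -> backend pod addresses.
SERVICE_BACKENDS = {
    ("10.96.0.10", 80): [("10.0.1.5", 8080), ("10.0.2.7", 8080), ("10.0.3.9", 8080)],
}

# Reverse map keyed by a per-socket cookie, so the application can still be
# shown the service address it originally asked for.
reverse_sk: dict[int, tuple[str, int]] = {}


def on_connect(cookie: int, dst: tuple[str, int], rng: random.Random) -> tuple[str, int]:
    """Rewrite the destination once, at connect() time."""
    backends = SERVICE_BACKENDS.get(dst)
    if backends is None:
        return dst  # not a service address, leave it untouched
    chosen = rng.choice(backends)
    reverse_sk[cookie] = dst  # remember what the caller asked for
    return chosen


def original_destination(cookie: int, peer: tuple[str, int]) -> tuple[str, int]:
    """What the application sees when it asks who it is connected to."""
    return reverse_sk.get(cookie, peer)


rng = random.Random(0)
real_dst = on_connect(cookie=1, dst=("10.96.0.10", 80), rng=rng)
print("packets go to:", real_dst)
print("application sees:", original_destination(1, real_dst))
```

The point of the sketch is that the translation happens once per connection and every later packet already carries the backend address, which is why no per-packet rewrite is needed.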

North-South: XDP and Direct Server Return

For traffic entering the cluster from outside, Cilium can attach to XDP (eXpress Data Path) hooks on the network driver itself, processing packets before the kernel allocates socket buffers. With Direct Server Return (DSR), the backend pod responds directly to the client without routing back through the load balancer node. Maglev consistent hashing ensures backend changes affect minimal active connections.
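For readers who haven't met Maglev hashing before, the following is a small Python sketch of the table-population step as described in the published Maglev design. It is not Cilium's implementation: the SHA-256 based hash, the table size of 251, and the backend names are assumptions chosen to keep the example short and deterministic.

```python
import hashlib


def _h(name: str, salt: str) -> int:
    """Deterministic 64-bit hash; production code would use a faster hash."""
    digest = hashlib.sha256(f"{salt}:{name}".encode()).digest()
    return int.from_bytes(digest[:8], "big")


def maglev_table(backends: list[str], size: int = 251) -> list[str]:
    """Populate a lookup table of prime length `size` from backend names."""
    if not backends:
        raise ValueError("need at least one backend")
    offsets = [_h(b, "offset") % size for b in backends]
    skips = [_h(b, "skip") % (size - 1) + 1 for b in backends]
    next_idx = [0] * len(backends)
    table: list = [None] * size
    filled = 0
    while filled < size:
        # Backends take turns, each claiming the next free slot
        # in its own permutation of the table.
        for i, backend in enumerate(backends):
            while True:
                slot = (offsets[i] + next_idx[i] * skips[i]) % size
                next_idx[i] += 1
                if table[slot] is None:
                    table[slot] = backend
                    filled += 1
                    break
            if filled == size:
                break
    return table


before = maglev_table(["pod-a", "pod-b", "pod-c"])
after = maglev_table(["pod-a", "pod-b"])

# Slots that changed owner even though their old owner is still alive.
moved = sum(1 for old, new in zip(before, after) if old != "pod-c" and old != new)
print(f"{moved} of {len(before)} slots moved between surviving backends")
```

A packet's flow hash modulo the table size picks the slot, and therefore the backend. Because each backend prefers the same slots before and after a change, most flows that were not on the removed backend keep their slot.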

The Homelab Configuration

Three Lenovo M80q nodes (i5-10400T, 16GB RAM) running Kubernetes v1.35.0 via kubeadm, with Cilium v1.18.6 as the CNI. The kernel is 6.8.0 (Ubuntu 24.04 HWE).

Step 1: Skip kube-proxy at Bootstrap

The cleanest way to replace kube-proxy is to never install it. During kubeadm init, the --skip-phases flag prevents the kube-proxy DaemonSet from being created:

    kubeadm init \
      --config=kubeadm-config.yaml \
      --upload-certs \
      --skip-phases=addon/kube-proxy

This is handled by Ansible playbook 03-init-cluster.yml. No kube-proxy pods, no leftover iptables rules, no conflict with Cilium.

Step 2: Install Cilium via Helm

The Ansible playbook 04-cilium.yml installs Cilium through Helm rather than the cilium install CLI. Helm provides version-pinned, reproducible installs and integrates with GitOps workflows.

The full values.yaml:

    cluster:
      name: homelab
    operator:
      replicas: 1
    routingMode: tunnel
    tunnelProtocol: vxlan
    gatewayAPI:
      enabled: true
    kubeProxyReplacement: true
    k8sServiceHost: "10.10.30.10" # kube-vip VIP
    k8sServicePort: "6443"
    l7Proxy: true
    l2announcements:
      enabled: true
    externalIPs:
      enabled: true
    devices: "eno1"

A few values worth explaining:

k8sServiceHost: "10.10.30.10" points to the kube-vip floating VIP from Part 2. Without kube-proxy, Cilium can't discover the API server through the default in-cluster kubernetes Service. You have to tell it explicitly.

routingMode: tunnel uses VXLAN encapsulation for pod-to-pod traffic. This works on any flat L2 network without BGP or custom router configuration. Native routing would give better performance, but VXLAN is simpler to operate and debug.

devices: "eno1" tells Cilium which host interface to attach its eBPF programs to, which is also the interface the L2 announcements go out on. Here that's the Intel I219-LM NIC on the M80q nodes.

What's Not in the Values File (and Why It Matters)

The homelab verification revealed two settings that are not configured but probably should be:

bpf.masquerade: true would move pod-to-external SNAT from iptables into eBPF. Right now, Cilium replaces kube-proxy's service routing chains but still uses iptables for masquerading. The cilium status output confirms this: Masquerading: IPTables [IPv4: Enabled]. "Replacing kube-proxy" doesn't mean "removing all iptables." Worth understanding the distinction.

bpf.hostLegacyRouting: false would enable native eBPF host routing, bypassing iptables and the upper host network stack for traffic between pods and the host. The cluster runs in Legacy host routing mode (Host: Legacy in status output). The kernel (6.8.0) supports native mode, but eBPF host routing also depends on eBPF masquerading, so the two settings go together. This is a performance tuning opportunity I haven't needed yet on a 3-node cluster, but it's the kind of detail a deep-dive post should call out honestly.

L2 Announcements: Replacing MetalLB

On bare-metal clusters, LoadBalancer Services need something to advertise their IPs on the local network. MetalLB is the standard answer. Cilium's L2 Announcements feature does the same thing without an additional component.

The feature is Beta in Cilium 1.18.x, but it's been stable enough for production homelab use. Four LoadBalancer services have been running on it for weeks with zero issues.

Two custom resources configure it:

CiliumLoadBalancerIPPool defines the available IP range:

    apiVersion: cilium.io/v2alpha1
    kind: CiliumLoadBalancerIPPool
    metadata:
      name: homelab-pool
    spec:
      blocks:
        - start: "10.10.30.20"
          stop: "10.10.30.99"

CiliumL2AnnouncementPolicy controls which interfaces and node types respond to ARP requests:

    apiVersion: cilium.io/v2alpha1
    kind: CiliumL2AnnouncementPolicy
    metadata:
      name: homelab-l2
    spec:
      interfaces:
        - ^eno.*
        - ^eth.*
        - ^enp.*
      nodeSelector:
        matchLabels:
          kubernetes.io/os: linux
      loadBalancerIPs: true
      externalIPs: true

How It Works Under the Hood

Cilium uses Kubernetes Leases for per-service leader election. For each LoadBalancer IP, one node wins the lease and starts responding to ARP requests for that IP. If the node goes down, another node acquires the lease and takes over.

On the homelab, all four leases currently sit on the same node (k8s-cp2):

| Lease | Holder | Service IP |
|---|---|---|
| homelab-gateway | k8s-cp2 | 10.10.30.20 |
| gitlab-shell-lb | k8s-cp2 | 10.10.30.21 |
| otel-collector | k8s-cp2 | 10.10.30.22 |
| adguard-dns | k8s-cp2 | 10.10.30.53 |

This is by design. L2 networking is inherently Active/Standby per IP; only one node can own a VIP at a time. The fact that all four landed on the same node means if k8s-cp2 goes down, all four services fail over simultaneously. The recovery is automatic (another node acquires each lease), but there's a brief disruption window. BGP-based load balancing can spread VIPs across nodes, but requires router support that most home networks don't have.
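The lease mechanics are easier to see in a small simulation. This Python sketch models only the acquire-or-wait decision; the 15-second lease duration is an illustrative value I picked, and the real election goes through the Kubernetes Lease API rather than an in-memory dict.

```python
from dataclasses import dataclass


@dataclass
class Lease:
    holder: str
    renewed_at: float
    duration: float  # seconds the lease stays valid without renewal

    def expired(self, now: float) -> bool:
        return now - self.renewed_at > self.duration


def try_acquire(leases: dict[str, Lease], service: str, node: str,
                now: float, duration: float = 15.0) -> bool:
    """Take (or renew) the lease only if it is free, lapsed, or already ours."""
    current = leases.get(service)
    if current is None or current.expired(now) or current.holder == node:
        leases[service] = Lease(holder=node, renewed_at=now, duration=duration)
        return True
    return False


leases: dict[str, Lease] = {}

# k8s-cp2 wins the lease first and starts answering ARP for the IP.
print(try_acquire(leases, "adguard-dns", "k8s-cp2", now=0.0))

# While the lease is fresh, another node cannot take it.
print(try_acquire(leases, "adguard-dns", "k8s-cp1", now=5.0))

# k8s-cp2 goes down and stops renewing; after expiry k8s-cp1 takes over.
print(try_acquire(leases, "adguard-dns", "k8s-cp1", now=20.0))
print(leases["adguard-dns"].holder)
```

The gap between the last renewal and the moment another node is allowed to take over is the disruption window: shorter leases fail over faster but generate more API traffic.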

What Cilium Replaces

The consolidation argument is real. The homelab runs one component where most clusters need three to five:

| Function | Traditional Stack | Homelab (Cilium) |
|---|---|---|
| CNI | Calico or Flannel | Cilium |
| Service proxy | kube-proxy | Cilium eBPF |
| Network policies | Calico | Cilium |
| LoadBalancer IPs | MetalLB | Cilium L2 Announcements |
| Ingress controller | NGINX or Traefik | Cilium Gateway API |
| Network observability | Prometheus exporters | Hubble |

Fewer components means fewer things to upgrade, fewer resource consumers, and fewer failure modes. The Gateway API integration (covered in detail in Part 4) serves 16 HTTPRoutes across 13 namespaces through a single gatewayClassName: cilium configuration.

Hubble: Observability Without Sidecars

Hubble reads flow data from the same eBPF programs that handle packet routing. No sidecar proxies, no packet capture, and very little extra overhead on top of a data path that is already running. On the homelab, Hubble processes 212-303 flows per second from ~30 pods.

    # See what's being dropped and why
    hubble observe --verdict DROPPED

    # Watch HTTP traffic to a specific service
    hubble observe --pod grafana --protocol http

    # All flows from a namespace
    hubble observe --from-namespace monitoring

The practical value shows up during debugging. With kube-proxy, a dropped packet is silent. You don't know it happened unless the application reports a timeout. Hubble explicitly logs every drop with a reason: Policy denied, Stale endpoint, TCP flags invalid. That turns hours of tcpdump analysis into a single CLI command.

The default Hubble buffer holds 4,095 flows before older entries are evicted. At 300 flows/s, that's a little under 14 seconds of history. For deeper analysis, you'd increase the buffer capacity (hubble.eventBufferCapacity in the Helm chart) or enable Hubble metrics export to Prometheus.
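The retention arithmetic is simple enough to script when sizing the buffer. The sketch below assumes the capacity has to be one less than a power of two with a ceiling of 65,535; that constraint is my reading of the Cilium agent options, so check the documentation for your version before relying on it.

```python
def retention_seconds(capacity: int, flows_per_second: float) -> float:
    """How long a flow survives in a ring buffer before being overwritten."""
    return capacity / flows_per_second


def capacity_for(seconds: float, flows_per_second: float, max_capacity: int = 65535) -> int:
    """Smallest capacity of the form 2**n - 1 that covers the wanted window.

    Assumption: capacities must be one less than a power of two and
    cannot exceed max_capacity.
    """
    needed = seconds * flows_per_second
    capacity = 1
    while capacity < needed and capacity < max_capacity:
        capacity = capacity * 2 + 1
    return capacity


# Numbers from the homelab: default buffer, observed flow-rate range.
print(f"{retention_seconds(4095, 300):.2f} s of history at 300 flows/s")
print(f"{retention_seconds(4095, 212):.2f} s of history at 212 flows/s")

# Capacity needed to keep about a minute of history at the peak rate.
print(capacity_for(60, 300))
```

Even the largest capacity under that assumption only covers a few minutes at this flow rate, which is why long-term analysis belongs in exported metrics rather than the in-memory buffer.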

Verifying the Replacement

After installation, verify that kube-proxy is truly gone and Cilium owns the data plane:

    # No kube-proxy pods or DaemonSet
    kubectl get pods -n kube-system -l k8s-app=kube-proxy
    # (empty)

    # No iptables service chains
    sudo iptables-save | grep KUBE-SVC
    # (empty)

    # Cilium confirms replacement
    cilium status
    # KubeProxyReplacement: True [eno1, Direct Routing]
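If you want to turn that check into something a script can assert on, a few lines of Python are enough. This is a sketch that parses "Key: value" lines; the sample text is the two status lines quoted in this post, and real cilium status output has more lines and may be formatted differently between versions.

```python
def parse_status(text: str) -> dict[str, str]:
    """Turn 'Key: value' lines into a dict, splitting on the first colon only."""
    fields: dict[str, str] = {}
    for line in text.splitlines():
        key, sep, value = line.partition(":")
        if sep and key.strip():
            fields[key.strip()] = value.strip()
    return fields


def kube_proxy_replaced(fields: dict[str, str]) -> bool:
    """True when the status reports kube-proxy replacement as enabled."""
    return fields.get("KubeProxyReplacement", "").startswith("True")


# Sample lines copied from this post, not captured programmatically.
SAMPLE = """\
KubeProxyReplacement: True [eno1, Direct Routing]
Masquerading: IPTables [IPv4: Enabled]
"""

status = parse_status(SAMPLE)
print("kube-proxy replaced:", kube_proxy_replaced(status))
print("masquerading:", status["Masquerading"])
```

In practice you would feed it the captured output of the status command instead of the hard-coded sample.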

The eBPF maps tell the rest of the story:

| BPF Map | Entries | Purpose |
|---|---|---|
| cilium_lb4_services_v2 | 200 | All services (ClusterIP + NodePort + LB) |
| cilium_lb4_backends_v3 | 87 | Backend pod addresses |
| cilium_lb4_reverse_sk | 71,312 | Socket reverse lookup for established connections |
| cilium_ipcache_v2 | 100 | IP-to-identity mapping |
| cilium_lxc | 26 | Local endpoints on this node |

The 200 service entries in the cilium_lb4_services_v2 map are what kube-proxy would have rendered as 200+ iptables chains. In Cilium, they're hash table entries with O(1) lookup time. The cilium_lb4_reverse_sk map with 71,312 entries is particularly interesting: each entry represents a socket cookie for an established connection, enabling Cilium to bypass NAT entirely for ongoing traffic.

Resource Cost

Cilium is not free. Each node runs a Cilium agent pod:

| Node | CPU | Memory | Uptime |
|---|---|---|---|
| k8s-cp1 | 60m | 183Mi | 13d |
| k8s-cp2 | 54m | 261Mi | 13d |
| k8s-cp3 | 66m | 284Mi | 17d |

That's roughly 5-7% of a single core and 180-285Mi of RAM per node. The operator pod adds negligible overhead. For a 3-node cluster with 16GB RAM per node, this is comfortable. Zero restarts across all pods over extended uptime.
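For anyone who wants to redo the percentages with their own numbers, here is the arithmetic as a short Python sketch. The figures are the ones from the table above; treating 16GB as 16 GiB is my simplification.

```python
# Per-node Cilium agent usage from the table: (millicores, MiB).
AGENTS = {
    "k8s-cp1": (60, 183),
    "k8s-cp2": (54, 261),
    "k8s-cp3": (66, 284),
}

NODE_RAM_MIB = 16 * 1024  # assumption: 16GB nodes treated as 16 GiB

for node, (millicores, mem_mib) in AGENTS.items():
    cpu_pct = millicores / 1000 * 100      # 1000m equals one full core
    ram_pct = mem_mib / NODE_RAM_MIB * 100
    print(f"{node}: {cpu_pct:.1f}% of one core, {ram_pct:.1f}% of RAM")

avg_cpu = sum(cpu for cpu, _ in AGENTS.values()) / len(AGENTS)
avg_mem = sum(mem for _, mem in AGENTS.values()) / len(AGENTS)
print(f"average: {avg_cpu:.0f}m CPU, {avg_mem:.0f}Mi memory")
```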

154 internal controllers are running healthy. 13 modules are stopped (disabled features: encryption, BIG TCP, bandwidth manager, SRv6, host firewall). One module reports degraded status, likely related to TLSRoute CRD expectations in the operator logs (Cilium 1.18.x looks for experimental Gateway API CRDs even when only standard CRDs are installed).

Version Compatibility

Cilium 1.18.x officially supports Kubernetes 1.30 through 1.33. The homelab runs K8s 1.35.0, which is outside the official matrix. It works. Zero restarts, 154/154 controllers healthy, all features operational.

Version matrices are conservative by necessity. Upstream Kubernetes maintains backward compatibility for API versions across several minor releases. The practical risk of running Cilium 1.18.x on K8s 1.35 is low, but it means you're responsible for catching compatibility issues yourself rather than relying on the vendor's CI matrix.

The operator logs do show v1 Endpoints is deprecated in v1.33+ warnings. Cilium 1.18.x still uses v1 Endpoints alongside EndpointSlice. Not a functional problem, but expect the next major Cilium release to drop v1 Endpoints support.
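Since running outside the tested matrix means doing your own compatibility checks, it can help to make the gap explicit in automation. A minimal Python sketch, using the version range stated above as input; the function names are hypothetical and nothing here queries Cilium or Kubernetes.

```python
def minor(version: str) -> tuple[int, int]:
    """Reduce a version string such as 'v1.35.0' to (major, minor)."""
    major_s, minor_s = version.lstrip("v").split(".")[:2]
    return int(major_s), int(minor_s)


def in_tested_range(k8s: str, lowest: str, highest: str) -> bool:
    """True when the cluster's minor version falls inside the tested range."""
    return minor(lowest) <= minor(k8s) <= minor(highest)


# Range quoted in this post for Cilium 1.18.x, and the homelab's version.
print(in_tested_range("v1.35.0", "1.30", "1.33"))
print(in_tested_range("v1.33.2", "1.30", "1.33"))
```

A check like this could run before an upgrade playbook and print a warning rather than fail, since "outside the matrix" is a risk signal and not proof of breakage.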

The Bigger Picture

Kubernetes itself is moving in this direction. KEP 4004 deprecated status.nodeInfo.kubeProxyVersion in K8s 1.31, acknowledging that kube-proxy may not be present. An nftables mode for kube-proxy landed as Beta in K8s 1.31, providing a modernized backend for environments that can't run eBPF, but not changing the fundamental architecture.

GKE Dataplane V2, AWS EKS Anywhere, and Azure CNI Powered by Cilium all use Cilium under the hood. It's one of the fastest-growing CNCF graduated projects, with over 506,000 contributions and 4,400+ individual contributors.

For the homelab, the argument is simpler. Cilium replaces kube-proxy, MetalLB, and a separate ingress controller with one component. Hubble gives you network observability that would otherwise require a service mesh. The eBPF data plane handles 200 services with the same lookup time it would handle 20,000. And the whole thing runs on 60m CPU and 250MB RAM per node.

What's Next

This post is the third in the "Building a Production-Grade Homelab" series:

- Why kubeadm Over k3s, RKE2, and Talos

- HA Control Plane with kube-vip

- Cilium Deep Dive: eBPF Networking (you are here)

- Gateway API vs Ingress: No Ingress Controller Needed

- Distributed Storage with Longhorn: 2 Replicas Are Enough

- The Modern Logging Stack: Loki + Alloy

- Alerting That Actually Works: Discord, Email, and Dead Man's Switches

- Self-Hosted GitLab: CI/CD Without Cloud Vendor Lock-in

Part 4 covers the 16 HTTPRoutes the homelab serves through Cilium's native GatewayClass, and why the ingress-nginx controller's retirement makes Gateway API the clear successor.